The Intergovernmental Panel on Climate Change (IPCC) is the premier international body collating the scientific assessment of climate change, and proposals for mitigation. A joint creation of the United Nations agencies the World Meteorological Organization (WMO) and the United Nations Environment Programme (UNEP), it brings together scientists from myriad disciplines to assess and summarize the current research on climate change, collating knowledge that is then used to inform governments and politicians. The scientists work on a volunteer basis.

The IPCC relies upon its member governments and “Observer Organizations” to nominate its volunteer authors. This means that, subject to their willingness to volunteer, the most prestigious individuals specialising in climate change in each discipline become the authors of the relevant IPCC chapter for their discipline. They then undertake a review of the peer-reviewed literature in their field (and some non-peer-reviewed work, such as government reports) to distil the current state of knowledge about climate change in their discipline. A laborious review process is also followed, in which the draft reports of the volunteer experts are reviewed by other experts in each field, to ensure conformity of the report with the discipline’s current perception of climate change. The emphasis upon producing reports which reflect the consensus within a discipline has resulted in numerous charges that the IPCC’s warnings are inherently too conservative (Herrando-Pérez et al., 2019, Brysse et al., 2013).

Figure 1: The IPCC’s graphic laying out the publication and review process behind its reports

But the main weaknesses with the IPCC’s methodology are firstly that, in economics, it exclusively selects Neoclassical economists, and secondly that, because there is no built-in review of one discipline’s findings by another, the conclusions of these Neoclassical economists about the dangers of climate change are reviewed only by other Neoclassical economists. The economic sections of IPCC reports are therefore unchallenged by the other disciplines that also contribute to the IPCC’s reports.

Given the extent to which economists dominate the formation of most government policies in almost all fields, and not just strictly economic policy (Fourcade et al., 2015, Hirschman and Berman, 2014, Christensen, 2018, Lazear, 2000), the otherwise acceptable process by which the IPCC collates human knowledge on climate change has critically weakened, rather than strengthened, human society’s response to climate change. This is because, commencing with “Nobel Laureate” (Mirowski, 2020) William Nordhaus, the economists who specialise in climate change have falsely trivialized the dangers that climate change poses to human civilization.

Nobel Oblige

In his 2018 Nobel Prize lecture, William Nordhaus described a trajectory that would lead to global temperatures peaking at 4°C above pre-industrial levels in 2145 as “optimal” (Nordhaus, 2018a, Slide 6) because, according to his calculations, the damages from climate change over time, plus the abatement costs over time, are minimised on this trajectory. He estimated the discounted cost of the economic damages from unabated climate change (which would see temperatures approach 6°C above pre-industrial levels by 2150) at $24 trillion, whereas the 4°C trajectory had damages of about $15 trillion and abatement costs of about $3 trillion. Trajectories with lower peak temperatures had higher abatement costs that overwhelmed the benefits (Nordhaus, 2018a, Slide 7). In a subsequent paper, Nordhaus claimed that even a 6°C increase would reduce global income by only 7.9%, compared to what it would be in the complete absence of global warming.
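The comparison behind the “optimal” label is simple addition of discounted damages and abatement costs. The following is a minimal sketch using only the two trajectories whose figures are quoted above; the dictionary layout and names are illustrative assumptions, not Nordhaus’s own model, and the unabated path is assumed to carry zero abatement cost.

```python
# Discounted costs in trillions of US dollars, as quoted above from the lecture.
# Assumption for this sketch: the unabated path has no abatement spending.
trajectories = {
    "unabated": {"damages": 24.0, "abatement": 0.0},
    "peak_4C": {"damages": 15.0, "abatement": 3.0},
}


def total_cost(costs):
    """Sum of discounted damages and discounted abatement costs."""
    return costs["damages"] + costs["abatement"]


totals = {name: total_cost(costs) for name, costs in trajectories.items()}
cheapest = min(totals, key=totals.get)

for name, total in totals.items():
    print(f"{name}: about ${total:.0f} trillion")
print("lowest total cost:", cheapest)
```

On these numbers the 4°C path costs about $18 trillion against $24 trillion, which is the whole basis for calling it optimal: the result depends entirely on the damage estimates fed in.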

This sanguine assessment of the costs of climate change contrasts starkly with the non-economic sections of IPCC reports. The recent Global Warming of 1.5°C Report, for example, predicted that 70% of insects and 40–60% of mammals would lose 50% or more of their range at 4.5°C (Warren et al., 2018, p. 792). Yet the economic components of IPCC reports concur with Nordhaus that damages from climate change will be slight: the Executive Summary of Chapter 10 of the 2014 Fifth Assessment, “Key Economic Sectors and Services”, opens with the declaration that:

For most economic sectors, the impact of climate change will be small relative to the impacts of other drivers (medium evidence, high agreement). Changes in population, age, income, technology, relative prices, lifestyle, regulation, governance, and many other aspects of socioeconomic development will have an impact on the supply and demand of economic goods and services that is large relative to the impact of climate change. (Arent et al., 2014a, p. 662)

How can such relatively small estimates of economic damages be reconciled with the large impacts that scientists expect on critical components of the biosphere? The answer is that they can’t, because the economic studies are not based on the scientific assessments of damage from climate change. Instead, the numerical estimates of the impact of climate change on GDP have been made up by economists themselves. I use the expression “made up” advisedly, because there is no more accurate way to characterise how Neoclassical economists have approached climate change. Before I explain how these spurious estimates were manufactured, it is useful to contrast them with some of the more easily understood dangerous consequences of a higher global average temperature.

The scientific assessment

Using Nordhaus’s sanguine prediction of a mere 7.9% reduction in global income from a 6°C increase (Nordhaus, 2018b, p. 345) as a reference point, three of the most obvious threats of a 6°C warmer world are the impact of these temperatures on human physiology, on the survival of other animals, and on the structure of the Earth’s atmospheric circulation systems.

A critical feature of human physiology is our ability to dissipate internal heat by perspiration. To do so, the external air needs to be colder than our ideal body temperature of about 37°C, and dry enough to absorb our perspiration as well. This becomes impossible when the combination of heat and humidity, known as the “wet bulb temperature”, exceeds 35°C. Above this level, we are unable to dissipate the heat generated by our bodies, and the accumulated heat will kill a healthy individual within three hours. Scientists have estimated that a 3.8°C increase in the global average temperature would make Jakarta’s temperature and humidity combination permanently fatal for humans, while a 5.5°C increase would mean that even New York would experience 55 days per year when the combination of temperature and humidity would be deadly (Mora et al., 2017, Figure 4, p. 504).

Temperature also affects the viable range for all biological organisms on the planet. Scientists have estimated that a 4.5°C increase in global temperatures would reduce the area of the planet in which life could exist by 40% or more, with the decline in the liveable area of the planet ranging from a minimum of 30% for mammals to a maximum of 80% for insects (Warren et al., 2018, Figure 1, p. 792).

The Earth’s current climate has three major air circulation systems in each hemisphere: a “Hadley Cell” between the Equator and 30°, a mid-latitude cell between 30° and 60°, and a Polar cell between 60° and 90°. This structure is why there are such large differences in temperature between the tropics, temperate and arctic regions, and relatively small differences within each region. Scientists have modelled the stability of these cells and concluded that they could be tipped, by an average global temperature increase of 4.3°C or more, into a state with just one cell in the Northern Hemisphere, and an average Arctic temperature of 22.5°C. This abrupt transition (known as a bifurcation), sometime in the next century, would disrupt agriculture, plant, animal and human life across the planet, and would occur far too quickly for any meaningful adaptation by natural and human systems alike (Kypke et al., 2020, Figure 6, p. 399).

These three factors and many others, caused not only by industry’s CO2 emissions but also by the myriad other ways we damage the biosphere, would occur together, and interact with each other, at temperature levels of 4–6°C above pre-industrial levels. Nordhaus’s assertion that such devastating changes to the Earth’s climate would reduce global GDP by less than 8%, compared to a world without climate change (Nordhaus, 2018b, p. 345), is simply risible. How could he arrive at such an absurd conclusion?

In common with most of my peers in non-Neoclassical economics, I initially assumed that the answer was that he applied far too high a “discount rate” to future damages (Hickel, 2018). If you think that 99% of the economy would be destroyed in a century from now by the catastrophic effects of a 6°C increase in temperatures, but discount that back to today’s world at a rate of 7% p.a., you get the result that this collapse in future GDP is worth only 0.1% of today’s GDP — which is no big deal.
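The arithmetic behind that guess can be checked directly. This is a minimal sketch of standard compound discounting; the function name is illustrative, and it follows the simplification in the text of comparing a share of future GDP with today’s GDP while ignoring growth.

```python
def present_value(future_loss_share, annual_rate, years):
    """Discount a future loss (as a share of GDP) back to today at a compound annual rate."""
    return future_loss_share / (1.0 + annual_rate) ** years


# 99% of the economy destroyed a century from now, discounted at 7% p.a.
loss_today = present_value(0.99, 0.07, 100)
print(f"{loss_today:.2%}")  # roughly a tenth of one percent
```

Because the divisor grows exponentially with the horizon, almost any future loss becomes negligible at a 7% rate over a century, which is why the discount rate was the obvious first suspect.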

If this had been how Nordhaus had arrived at such a low damage estimate, then the high discount rate could be challenged, but the rest of his analysis could potentially be sound. But our guess was wrong. Nordhaus explained why he used a high discount rate when he strongly criticised the lower discount rate used by the Stern Review (Stern, 2007). It was not to reduce future catastrophic damages to trivial levels now, but because, if he used a low discount rate, then:

the relatively small damages in the next two centuries get overwhelmed by the high damages over the centuries and millennia that follow 2200. (Nordhaus, 2007, p. 202. Emphasis added)

“Relatively small damages in the next two centuries”? How on Earth did he reach that conclusion? I found out, to my disgust, that he and his colleagues ignored or distorted the work of scientists, and instead made up their own trivial estimates of the economic damage from climate change. I have spent fifty years of my life being a critic of Neoclassical economics. Neoclassical work on climate change is by far the lowest grade work that I have read in that half-century.

Reading Catastrophe and Seeing Utopia

I am accustomed to shaking my head in wonder at the capacity of Neoclassical economists to come up with ludicrous assumptions to jump over some logical impasse, like this gem by the developer of the Capital Asset Pricing Model, William Sharpe. After meticulously deriving a model of an individual “rational” investor, Sharpe then proceeded to model the entire market by assuming:

homogeneity of investor expectations: investors are assumed to agree on the prospects of various investments — the expected values, standard deviations and correlation coefficients described in Part II. (Sharpe, 1964, pp. 433–34, Fama and French, 2004, p. 26)

This is patently absurd. But at least Sharpe conceded as much, when he attempted to justify it via a clumsy rendition of Milton Friedman’s dodgy methodological defence of absurd assumptions (Friedman, 1953). Fama, who led the empirical defence of this theory (Fama, 1970, Fama and French, 1996, Fama and French, 2004), fitted it to actual stock market data, which for a time appeared to support the theory.

But in this work on climate change, Neoclassical economists have fitted their absurd-assumptions models to their own manifestly absurd made-up data. When they consulted scientists or referenced scientific literature, they frequently distorted the research or drowned the warnings of scientists with the blasé expectations of economists. This was nowhere more evident than in Nordhaus’s treatment of a key paper on the likelihood that global warming will trigger “tipping points” that cause runaway climate change, “Tipping elements in the Earth’s climate system” (Lenton et al., 2008).

Lenton’s paper considered whether there were large-scale elements of Earth’s climatic system which could be triggered into a qualitative change that would in turn rapidly alter the climate. He and his co-authors identified nine such systems (all but one of which, the Indian Monsoon, could be tipped by temperature increases alone), after applying the conditions that these systems had to be “subsystems of the Earth system that are at least subcontinental in scale” which could be “switched — under certain circumstances — into a qualitatively different state by small perturbations”. They excluded “systems in which any threshold appears inaccessible this century” (Lenton et al., 2008, pp. 1786–87).

If “Tipping elements” exist, and if they can be triggered by a temperature rise that can be expected from Global Warming this century, and if they would have drastic impacts upon the global climate, then the only prudent thing to do is to avoid such a temperature rise in the first place. If that rise occurs and a climatic element is tipped, it will unleash forces that will be far too large for human action to reverse. A qualitatively different climate would result, whose consequences for human civilisation cannot be predicted by extrapolating from what we know about our current climate.

Lenton and his co-authors identified two definite candidates for a tipping point this century — “Arctic sea-ice and the Greenland ice sheet” — and noted that “at least five other elements could surprise us by exhibiting a nearby tipping point” (Lenton et al., 2008, p. 1792) before 2100. They could not at the time specify a critical value for the temperature at which the decline in Arctic summer sea-ice would tip the Arctic from being a reflector of solar energy to an absorber, but noted that “a summer ice-loss threshold, if not already passed, may be very close and a transition could occur well within this century” (Lenton et al., 2008, p. 1789).

Nordhaus “summarised” this research with the sentence:

“Their review finds no critical tipping elements with a time horizon less than 300 years until global temperatures have increased by at least 3°C” (Nordhaus, 2013a, p. 60)

This is a total fabrication. Lenton’s careful definition itself contradicts Nordhaus’s alleged summary, by explicitly excluding systems which could not be triggered this century. Nordhaus’s claim that they found no systems which could be triggered in the next three centuries is false: they identified at least two, and possibly seven (out of eight!), which could be — though the process of transiting from their current to final states could take several centuries. Nordhaus claimed that the minimum temperature rise that could trigger a “critical tipping element” was 3°C: they said that Arctic summer sea-ice could be triggered with a rise of as little as 0.5°C — a level we have already well and truly exceeded.

There are few, if any, scientific or economic issues that are more important than this. It deserves the closest possible attention. And yet, if an undergraduate student of mine had summarised this paper as Nordhaus did, I would have failed him. However, since I was reading the work of a “Nobel Prize winner”, rather than an unsatisfactory undergraduate, I found myself having to do forensic research to work out how on Earth Nordhaus could have read this paper, which explicitly warned against using “smooth projections of global change” and of a likely tipping point in the Arctic, as supporting his trivialising of the significance of losing Arctic summer sea-ice and his use of a “damage function” that ignored tipping points (see Table 1).

Table 1: The chasm between Lenton’s conclusion and Nordhaus’s interpretation of this research

The only feasible explanation for Nordhaus’s erroneous summary was a table by Lenton in a related publication (Richardson et al., 2011), which Nordhaus also referenced as a source for his interpretation (Nordhaus, 2013b, p. 334). Lenton described this table as “A simple ‘straw man’ example of tipping element risk assessment”. Each “tipping element” was given a point score in terms of likelihood of occurring this century (Low=1, Medium=1.5, Medium-High=2.5, and High=3) and relative impact on the climate over the next millennium (Low=1, Low-Medium=1.5, Medium=2, Medium-High=2.5, and High=3). A risk score was derived as the product of likelihood times impact. Arctic summer sea-ice had the lowest rating on impact (Low=1), but the highest in terms of likelihood (High=3), for an overall score of 3 — see Table 2, which ranks these tipping elements in the descending order of importance that Nordhaus gave them in his table N.1 (Nordhaus, 2013b, p. 333).
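The scoring rule just described can be written out in a few lines. This is a minimal sketch that uses the point values exactly as listed in the text above; the function and dictionary names are illustrative, and only the Arctic summer sea-ice ratings are taken from the text, since the other elements’ ratings appear only in Table 2.

```python
# Point values as listed in the text for Lenton's "straw man" assessment.
LIKELIHOOD = {"Low": 1.0, "Medium": 1.5, "Medium-High": 2.5, "High": 3.0}
IMPACT = {"Low": 1.0, "Low-Medium": 1.5, "Medium": 2.0, "Medium-High": 2.5, "High": 3.0}


def risk_score(likelihood, impact):
    """Risk is the product of the likelihood score and the relative impact score."""
    return LIKELIHOOD[likelihood] * IMPACT[impact]


# Arctic summer sea-ice: highest likelihood, lowest relative impact.
arctic_sea_ice = risk_score("High", "Low")
print(arctic_sea_ice)
```

The point of a product rule is that a high likelihood can outweigh a low relative impact, so ranking by impact alone discards half of the assessment.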

Table 2: Based on Lenton’s Table “A simple ‘straw man’ example of tipping element risk assessment, by Timothy M. Lenton” in (Richardson et al., 2011, p. 186), and Nordhaus’s Table N-1, with his ranking of tipping elements used to sort the table (Nordhaus, 2013b, p. 333)

It appears that Nordhaus’s relative ranking was based primarily on Lenton’s “Impact” measure, since he ranked Arctic summer sea-ice as the lowest (one star in his Table N.1, or a low ranking of 3 in Table 2), below disrupting the Atlantic Thermohaline Circulation (THC) for example (two stars, or a medium ranking of 2), whereas Lenton ranked Arctic sea-ice above the THC in risk assessment terms (3 versus 2.5, where a higher score is worse) because of its much higher likelihood of occurring this century.

This may explain how Nordhaus came to classify losing the Arctic summer sea-ice as an event of low concern in climate change. But this is a false reading of Lenton’s table. As Lenton explained, “Impacts are considered in relative terms based on an initial subjective judgment (noting that most tipping-point impacts, if placed on an absolute scale compared to other climate eventualities, would be high)” (Richardson et al., 2011, p. 186. Emphasis added). In other words, while the impact of the loss of Arctic summer sea-ice was low compared to, for example, the impact of losing the Atlantic Thermohaline Circulation (THC), it was not low in any absolute sense: losing the Arctic’s summer sea-ice would have a significant qualitative impact on the climate. Nordhaus’s interpretation of Lenton’s “low” ranking as meaning that Arctic summer sea-ice was not of absolute importance to the climate — it was not “critical”, he alleged — is a fundamentally incorrect reading of Lenton’s research, as Lenton confirmed to me in personal correspondence (Keen and Lenton, 2020).

Nordhaus also misinterpreted the time ranges given (the “Transition Timescale” column in Table 2) as indicating that these tipping elements were not going to be triggered for that many years, when in fact they were an estimate of how long it would take from an initial triggering this century until the end of the transition process. The complete melting of the West Antarctic ice sheet might well take 300 years from its initial triggering, whereas the Arctic summer sea-ice could disappear over a period measured in decades rather than centuries. But the fate of the West Antarctic ice sheet would be decided this century, if we let increased CO2 levels drive up global temperature by 3°C or more — which we are well on track to do, and which we would reach on Nordhaus’s “optimum” trajectory by 2085.

In summary, Nordhaus read Lenton’s research as a climate change denier would, cherry picking it to find ways to support his preconception that climate change was insignificant. This was a consistent theme in how Nordhaus treated scientific research on climate change, as evidenced by the surveys he undertook of scientists in 1994 (Nordhaus, 1994) and of scientific literature in 2017 (Nordhaus and Moffat, 2017).

Drowning Scientists with Economists

Nordhaus’s 1994 survey asked people from various academic backgrounds to give their estimates of the impact on GDP of three global warming scenarios, including a 3°C rise by 2090. The 2014 IPCC Report used this as one data point in Figure 10.1 (see Figure 2), claiming that a 3°C temperature rise would cause a 3.6% fall in GDP.

Nordhaus surveyed 19 people, 18 of whom fully complied, and one partially. Nordhaus described them as including 10 economists, 4 “other social scientists”, and 5 “natural scientists and engineers”, noting that eight of the economists come from “other subdisciplines of economics (those whose principal concerns lie outside environmental economics)” (Nordhaus, 1994, p. 48). This, ipso facto, should rule them out from taking part in this expert survey in the first place.

There was extensive disagreement between the relatively tiny cohort of actual scientists surveyed, and, in particular, the economists “whose principal concerns lie outside environmental economics”. As Nordhaus noted, “Natural scientists’ estimates [of the damages from climate change] were 20 to 30 times higher than mainstream economists’” (Nordhaus, 1994, p. 49). The average estimate by “Non-environmental economists” (Nordhaus, 1994, Figure 4, p. 49) of the damages to GDP from a 3°C rise by 2090 was 0.4% of GDP; the average for natural scientists was 12.3%, and this was with one of them refusing to answer Nordhaus’s key questions:

“I must tell you that I marvel that economists are willing to make quantitative estimates of economic consequences of climate change where the only measures available are estimates of global surface average increases in temperature. As [one] who has spent his career worrying about the vagaries of the dynamics of the atmosphere, I marvel that they can translate a single global number, an extremely poor surrogate for a description of the climatic conditions, into quantitative estimates of impacts of global economic conditions.” (Nordhaus, 1994, pp. 50–51)

Comments from economists lay at the other end of the spectrum from this self-absented scientist. Because they had a strong belief in the ability of human economies to adapt, they could not imagine that climate change could do significant damage to the economy, whatever it might do to the biosphere itself:

There is a clear difference in outlook among the respondents, depending on their assumptions about the ability of society to adapt to climatic changes. One was concerned that society’s response to the approaching millennium would be akin to that prevalent during the Dark Ages, whereas another respondent held that the degree of adaptability of human economies is so high that for most of the scenarios the impact of global warming would be “essentially zero”. (Nordhaus, 1994, pp. 48–49. Emphasis added)

Given this extreme divergence of opinion between economists and scientists, one might expect that Nordhaus’s next survey would examine the reasons for it. In fact, the opposite applied: his methodology excluded non-economists entirely.

Rather than a survey of experts, this was a literature survey (Nordhaus and Moffat, 2017). He and his co-author searched for relevant articles using the string “(damage OR impact) AND climate AND cost” (Nordhaus and Moffat, 2017, p. 7), which is reasonable, if too broad (as they admit in the paper).

The key flaw in this research was where they looked: they executed their search string in Google, which returned 64 million results, Google Scholar, which returned 2.8 million, and the economics-specific database Econlit, which returned just 1700 studies. On the grounds that there were too many results in Google and Google Scholar, they ignored those results, and simply surveyed the 1700 articles in Econlit (Nordhaus and Moffat, 2017, p. 7). These are, almost exclusively, articles written by economists. They did not search a comparable science database like ProQuest Science Journals, where the same too-broad search string (on January 11th 2021) returned 60,315 peer-reviewed full-text articles, and a narrower search string “damage AND climate AND gdp” returned a manageable 2,721 hits.

There is therefore no significant science-based content in the papers that generated the “data” on which IPCC economists concluded that “the impact of climate change will be small relative to the impacts of other drivers” (Arent et al., 2014a, p. 662). All of the pairs of numbers in Figure 2 were generated by economists, and all but one predict an extremely small impact on GDP from global warming: a fall of less than 3% for temperature rises of up to 3°C (or a 6% fall for a 5.5°C rise), compared to what GDP would be in the complete absence of climate change.

Figure 2: IPCC economic estimates of damages to GDP from global warming (Arent et al., 2014a, p. 690)

These numbers were generated in two main ways, which the IPCC report described as “Enumeration” and “Statistical” (Arent et al., 2014b, Table SM10.2, p. SM10–4). Enumeration added up estimates of damages to industries from climate change, under the assumption that only activities exposed to the weather would be affected; the “statistical” method used the weak correlation between average temperature and average income today as a proxy for the impact of climate change over time.

Equating Climate with Weather

Nordhaus’s first predictions of the economic consequences of climate change in a refereed journal — the Economic Journal — came in 1991. This paper, entitled “To Slow or Not to Slow: The Economics of The Greenhouse Effect” (Nordhaus, 1991) contained the seeds of all his future work on climate change. He equated climate change over time, due to dramatically increasing the amount of solar radiation retained in the biosphere as heat by increased greenhouse gases, with the geographic variation of today’s climate across the globe:

human societies thrive in a wide variety of climatic zones. For the bulk of economic activity, non-climate variables like labour skills, access to markets, or technology swamp climatic considerations in determining economic efficiency. (Nordhaus, 1991, p. 930)

He assumed that climate change would only affect economic activities that were directly exposed to the weather:

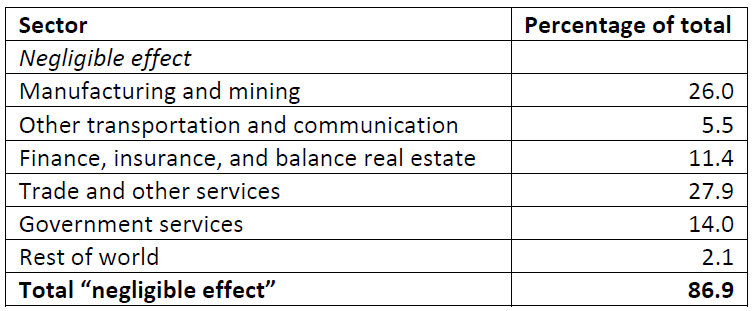

The most sensitive sectors are likely to be those, such as agriculture and forestry, in which output depends in a significant way upon climatic variables… Our estimate is that approximately 3% of United States national output is produced in highly sensitive sectors, another 10% in moderately sensitive sectors, and about 87% in sectors that are negligibly affected by climate change. (Nordhaus, 1991, Table 5, p. 930. Emphasis added)

Table 3: Extract from Nordhaus’s breakdown of economic activity by vulnerability to climatic change (Nordhaus, 1991, p. 931)

Using these beliefs, he derived trivial estimates for the impact of climate change on the economy:

damage from a 3°C warming is likely to be around ¼% of national income in United States … We might raise the number to around 1% of total global income to allow for these unmeasured and unquantifiable factors, although such an adjustment is purely ad hoc… my hunch is that the overall impact upon human activity is unlikely to be larger than 2% of total output. (Nordhaus, 1991, p. 933)

All subsequent papers by Neoclassical climate-change economists replicated the assumption that any activity not directly exposed to the weather would be immune from climate change. The 2014 IPCC Report restated it as a “Frequently Asked Question”:

FAQ 10.3 | Are other economic sectors vulnerable to climate change too? Economic activities such as agriculture, forestry, fisheries, and mining are exposed to the weather and thus vulnerable to climate change. Other economic activities, such as manufacturing and services, largely take place in controlled environments and are not really exposed to climate change. (Arent et al., 2014a, p. 688. Emphasis added)

Equating Time with Space

Nordhaus’s colleague Robert Mendelsohn (Mendelsohn et al., 1994, Mendelsohn et al., 2000) used Nordhaus’s assumption that today’s climate and GDP data was relevant to climate change to invent another way to predict the impact of global warming from today’s data:

An alternative approach … can be called the statistical approach. It is based on direct estimates of the welfare impacts, using observed variations (across space within a single country) in prices and expenditures to discern the effect of climate. Mendelsohn assumes that the observed variation of economic activity with climate over space holds over time as well; and uses climate models to estimate the future effect of climate change. (Tol, 2009, p. 32. Emphasis added)

This method of generating numbers takes average temperature data and per capita income data, and uses the weak correlation between them to allege that climate change will be relatively trivial. Figure 3 shows temperature and per capita income for the contiguous United States on a State-by-State basis (“Gross State Product per capita”, or GSPPC).

Figure 3: Correlation of temperature and USA Gross State Product per capita

There is no real pattern, but a quadratic can be fitted to the data as shown, with a low correlation coefficient of 0.31. In statistical terms, this means that this function has terrible predictive power. For example, it predicts that States which are 4°C hotter or colder than the USA average will have a GSPPC that is 5% lower. But the states that are between 3.5°C and 4.5°C above or below the USA’s average temperatures include New York at 30% above the average, and Arkansas at 29% below. If you were trying to win a game of Trivial Pursuit, you wouldn’t use this function to supply your answers on US GSP per capita today.

Trivial estimates of serious damages

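And yet Nordhaus uses a quadratic, derived from data much like that in Figure 3, but with an even smaller coefficient, to “predict” the impact of Global Warming on “Gross Global Product” (GGP). The quadratic in Figure 3 predicts a fall of about 5% at 4°C from the average, which implies a coefficient of roughly 0.3% per degree Celsius squared. Nordhaus’s “damage function”, in the latest version of his global warming model DICE (for “Dynamic Integrated Climate and Economics”), is $D(\Delta T) = 0.00227\,\Delta T^2$, where $D$ is the fraction of global income lost and $\Delta T$ is the change in global temperature in °C: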

The parameter used in the model was an equation with a parameter of 0.227 percent loss in global income per degrees Celsius squared with no linear term. This leads to a damage of 2.0 percent of income at 3°C, and 7.9 percent of global income at a global temperature rise of 6°C. (Nordhaus, 2018b, p. 345)

These predictions are absurd. A 3°C increase could trigger, and a 6°C increase would trigger, every “tipping element” shown in Table 2. The Earth would have a climate unlike anything our species has experienced in its existence, and the Earth would transition to it hundreds of times faster than it has in any previous naturally-driven global warming event (McNeall et al., 2011). The Tropics and much of the globe’s temperate zone would be uninhabitable by humans and most other life forms. And yet Nordhaus thinks it would reduce the global economy by just 8%?

Comically, Nordhaus’s damage function is symmetrical — it predicts the same damages from a fall in temperature as for an equivalent rise. It therefore predicts that a 6°C fall in global temperature would also reduce GGP by just 7.9% (see Figure 3). Unlike global warming, we do know what the world was like when the temperature was 6°C below 20th century levels: that was the average temperature of the planet during the last Ice Age (Tierney et al., 2020), which ended about 20,000 years ago. At the time, all of America north of New York, and of Europe north of Berlin, was beneath a kilometre of ice. The thought that a transition to such a climate in just over a century would cause global production to fall by less than 8% is laughable.
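The symmetry follows directly from the form of the function. This is a minimal sketch of a pure quadratic with the quoted coefficient of 0.227 percent per degree Celsius squared and no linear term; the function name is illustrative, and the sketch makes no attempt to reproduce DICE itself or whatever rounding lies behind the published figures.

```python
def damage_share(delta_t, coefficient=0.00227):
    """Fraction of global income lost under a pure quadratic damage function."""
    return coefficient * delta_t ** 2


for delta_t in (3, 6, -6):
    print(f"{delta_t:+d}°C: {damage_share(delta_t):.1%} of global income")
```

Squaring the temperature change removes its sign, so warming and cooling of the same size give identical losses. Evaluated this plainly, the coefficient gives about 2.0% at 3°C and a little over 8% at 6°C, slightly above the 7.9% quoted, which does not change the point about scale.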

Again, I found myself in the position of a forensic detective, trying to work out how on Earth otherwise intelligent people could come to believe that climate change would only affect industries that are directly exposed to the weather, and that the correlation between climate today and economic output today across the globe could be used to predict the impact of global warming on the economy. The only explanation that made sense is that these economists were mistaking the weather for the climate. Ironically, given the calibre of Nordhaus’s later contributions, he gave a reasonable, if statistically-oriented, explanation of the difference between weather and climate in an early paper:

When we refer to climate, we usually are thinking of the average of characteristics of the atmosphere at different points of the earth, including the variances such as the diurnal and annual cycle. The important characteristics for man’s activities are temperature, precipitation, snow cover, winds and so forth. A more precise representation of the climate would be as a dynamic, stochastic system of equations. The probability distributions of the atmospheric characteristics is what we mean by climate, while a particular realization of that stochastic process is what we call the weather. (Nordhaus, 1976, p. 2)

This “probability distribution”, as we experience it at any given location on Earth, is affected by the amount of energy in the biosphere, which varies in three primary ways:

- Variations in the amount of the energy from the Sun that reaches the Earth;

- Variations in the amount of this energy retained by greenhouse gases; and

- Variations caused by differences in location on the planet — primarily, differences in distance from the Equator, altitude above sea level, and distance from oceans.

The first factor varies, via cyclical variations in the Earth’s orbit, over time measured in thousands of years, and via long-term trends in the Sun’s evolution, measured in billions of years. Neither of these is relevant in the timeframe of global warming. Given the Earth’s orbit, and how much its surface reflects solar radiation, then in the absence of the second factor (greenhouse gases) the average temperature of the atmosphere would be minus 18°C (Hay, 2014, p. 30, Galimov, 2017).
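The minus 18°C figure can be checked with the standard radiative-balance calculation, in which the sunlight a planet absorbs equals the heat it radiates as a black body (the Stefan-Boltzmann law). The sketch below is only a back-of-envelope version under stated assumptions: the solar constant (about 1361 W/m²) and planetary albedo (about 0.30) are commonly quoted round values that I have supplied, not figures from the cited sources, and the function name is hypothetical.

```python
# Assumed inputs (not taken from the text or its cited sources):
SOLAR_CONSTANT = 1361.0            # W/m^2, sunlight arriving at Earth's orbit
ALBEDO = 0.30                      # fraction of sunlight reflected to space
STEFAN_BOLTZMANN = 5.670374419e-8  # W/(m^2 K^4), physical constant


def effective_temperature_celsius(solar_constant=SOLAR_CONSTANT, albedo=ALBEDO):
    """Equilibrium temperature of a planet with no greenhouse effect."""
    # Sunlight is intercepted over a disc but radiated from a sphere,
    # which has four times the area, hence the division by 4.
    absorbed_flux = solar_constant * (1.0 - albedo) / 4.0
    # Stefan-Boltzmann: emitted flux = sigma * T^4, solved for T in kelvin.
    kelvin = (absorbed_flux / STEFAN_BOLTZMANN) ** 0.25
    return kelvin - 273.15


if __name__ == "__main__":
    print(f"{effective_temperature_celsius():.1f} degrees C")
```

With these assumed inputs the result lands within a degree of minus 18°C; slightly different albedo values move it by a degree or so in either direction.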

The second is what is at issue with global warming. If the Earth’s atmosphere captured and re-radiated all the infra-red energy that the planet’s surface reflects back into space (as a relatively dark body, the Earth absorbs high-frequency light and ultra-violet energy from the Sun, and reflects back low-frequency infra-red energy), then the average temperature of the atmosphere would be 29.5°C. With only naturally-occurring greenhouse gases, the average temperature of the planet at present would be 15°C. Human industrial activity is adding to this retention of solar energy, primarily by generating additional CO2 from burning fossil fuels.

The third, geographic factor is what is captured by the data shown in Figure 3 — and this has nothing to do with global warming. And yet this data, plus the belief that only industries which are exposed to the weather will be affected by global warming, is what underpins Nordhaus’s “damage function” (and similar constructs by his fellow Neoclassical climate change economists) with its trivial forecasts for economic damage from climate change.

One thing that Figure 3 does establish is that wide variations in temperature within one country today are associated with relatively small differences in income today. The range of average temperatures shown there is 16.8°C, from 4.7°C for North Dakota to 21.5°C for Florida. However, the two States had very similar Gross State Products per capita in 2000 ($26,700 versus $26,000). This cannot be used to argue that, therefore, a huge change in global average temperatures due to global warming will have only a small effect on income — but that is precisely how Neoclassical economists have used this data.

That is evident in their predictions, but it helps to also have verbal confirmation of the disconnect between what economists think of climate change, and what it really is. The following statements were made on Twitter by the prominent Neoclassical climate change economist Richard Tol. Tol was one of the two lead co-authors of the economic sections of the 2014 IPCC report on climate change (Arent et al., 2014a), the developer of FUND (“Climate Framework for Uncertainty, Negotiation and Distribution”), one of the “Integrated Assessment Models” (IAMs) used to supposedly estimate the impact of climate change on the economy, and editor of the academic journal Energy Economics. However, his arguments are those one would associate with an ignorant troll, not an influential economist in the theory and practice of climate change.

In the first tweet, he concludes that climate change is not a problem, because US States with vastly different temperatures today have similar incomes today:

10K is less than the temperature distance between Alaska and Maryland (about equally rich), or between Iowa and Florida (about equally rich). Climate is not a primary driver of income. https://twitter.com/RichardTol/status/1140591420144869381?s=20

In the second, he concludes that global warming can’t be a problem, because it is expected to increase temperatures by a small amount compared to the daily temperature variation for any one location on Earth — which averages about 14°C for the continental USA (Qu et al., 2014):

People thrive in a wide range of climates. The projected climate change is small relative to the diurnal cycle. It is therefore rather peculiar to conclude that climate change will be disastrous. Those who claim so have been unable to explain why. https://twitter.com/RichardTol/status/1313182006310731776?s=20

My personal experience of responding to delusional beliefs like these reminds me of the aphorism widely attributed to George Bernard Shaw, that “he who wrestles with a hog must expect to be spattered with filth, whether he is vanquished or not”. In contrast, this is how a key scientific paper (Im et al., 2017) summarised what a world 4°C warmer, let alone 10°C-14°C warmer, would mean for the over 2 billion human inhabitants of South Asia:

Human exposure to TW [wet bulb temperatures] of around 35°C for even a few hours will result in death even for the fittest of humans under shaded, well-ventilated conditions… TWmax is projected to exceed the survivability threshold … under the RCP8.5 scenario by the end of the century over most of South Asia, including the Ganges river valley, northeastern India, Bangladesh, the eastern coast of India, Chota Nagpur Plateau, northern Sri Lanka, and the Indus valley of Pakistan. (Im et al., 2017, p. 4)

The failure of peer review

There is one generic defence of the failure of the referees of economic journals to identify this work as effectively fraudulent. Academics are not paid to referee, and the time they are supposedly allotted to do refereeing has been largely eliminated by the efficiency drives that politicians and the managerial class of University administrators have forced upon them. So refereeing is not done as professionally as the image of “peer reviewed research” implies. Refereeing is also a far lower standard than replication. To referee a paper, all a referee has to do is read it and pass judgment. To replicate, you actually have to independently reproduce the results claimed in the paper.

That said, there is no excuse for referees approving for publication papers that, for example, make the critical and absurd assumption that 87% of GDP will be unaffected by climate change, because it happens indoors (Nordhaus, 1991, p. 930). Here, the guilty party is not Nordhaus alone, but the entire edifice of Neoclassical economics. Only Neoclassical economists, who in general have what Paul Romer described as a “noncommittal relationship with the truth” (Romer, 2016, p. 5), would recommend publication of papers that make critical assumptions that are so obviously false.

As I detail in Debunking Economics (Keen, 2011), Neoclassical economics is riddled with false assumptions, because numerous theoretical and empirical requirements of the underlying theory have been proven to be false. Rather than accepting that their initial beliefs were wrong, and then abandoning these beliefs to develop a richer, more complex theory, Neoclassical economists have clung to those beliefs by adding patently absurd assumptions to hide the contrary proofs.

This practice is defended by describing such assumptions as “simplifying”, but that is a false description. A simplifying assumption is something that, if it is false, complicates the analysis a great deal, but changes the result only marginally. For example, Galileo’s demonstration that dense bodies fall at the same speed, regardless of their weight, effectively assumed no air resistance. Taking air resistance into account would have resulted in a vastly more complicated demonstration, but no significant change in the result.

On the other hand, the type of assumption that Neoclassical economists defend as “simplifying” is frequently critical to the conclusions drawn from the model: if the assumption is false, then so are the conclusions (Musgrave, 1990). Such assumptions abound in Neoclassical economics, so much so that economists have convinced themselves that it is invalid to criticise a theory on the grounds that its assumptions are unrealistic:

To put this point less paradoxically, the relevant question to ask about the “assumptions” of a theory is not whether they are descriptively “realistic”, for they never are, but whether they are sufficiently good approximations for the purpose in hand. And this question can be answered only by seeing whether the theory works, which means whether it yields sufficiently accurate predictions. (Friedman, 1953, p. 153)

This, as Alan Musgrave explained, is nonsense (Musgrave, 1981). However, because they accept Friedman’s dodgy methodology, Neoclassical referees regularly recommend the publication of papers in which assumptions are made that are patently false, if those papers support Neoclassical beliefs. To such referees, Nordhaus’s assumption that “87% [of United States national output is produced] in sectors that are negligibly affected by climate change” (Nordhaus, 1991, p. 930) was just a “simplifying assumption”, which could not be challenged.

This methodological fallacy is dangerous enough with standard economic issues. But with climate change, it is existentially so. When the theory is proven wrong by the failure of its predictions, the consequences of this failure will be both catastrophic and irreversible. As DeCanio eloquently put it, waiting until we know that Neoclassical economists are wrong on climate change “amounts to conducting a one-time, irreversible experiment of unknown outcome with the habitability of the entire planet” (DeCanio, 2003, p. 3).

Conclusion

Neoclassical economics has dominated economics for 150 years, which has given it the advantage of incumbency over its rivals — so much so that most people in authority, journalists, University students, and the general public, think that Neoclassical economics is economics. When it is criticised, even by other economists like myself (Keen, 2011), the public is unlikely to hear the criticisms in the first place, and likely to regard them as coming from cranks if they do. Q-Anon aside, we trust those ordained as experts in their own fields.

This trust is a characteristic of human society which is utterly justified in the complex societies in which we live. Deference to expertise is a necessary feature of life in a complex world. However, with economics, this justifiable deference has helped entrench a fundamentally unscientific school of thought, and has made progress in economics virtually impossible.

I believed, before the Global Financial Crisis, that the only way economics could be shifted would be by its failure to predict a serious economic crisis. But the transient nature of economic crises — especially in the face of governments determined not to let capitalism fail on their watch — meant that economic theory scraped through that crisis relatively unscathed. That may all change in the next decade, because our trust in expertise has meant that, though scientists led the study of climate change, we have let Neoclassical economists determine our policy response.

Most politicians have studied some economics. Few have studied science, and they are therefore unable to read the science-based parts of the IPCC Reports. Most of their advisers, who actually read the reports for the politicians, are also trained in economics, rather than the sciences. Most political debate is about matters of economics, rather than science. The end result of all this is that, though scientists have led the study of climate change itself, economists have dominated public policy towards it. As Stephen DeCanio put it in 2003:

it is undeniably the case that economic arguments, claims, and calculations have been the dominant influence on the public political debate on climate policy in the United States and around the world… It is an open question whether the economic arguments were the cause or only an ex-post justification of the decisions made … but there is no doubt that economists have claimed that their calculations should dictate the proper course of action. (DeCanio, 2003, p. 4)

Because these economists, starting with William Nordhaus, trivialised the dangers of climate change, the policy response to climate change has also been trivial. Human civilisation may well not survive Neoclassical economics. It’s time it was eliminated, before it eliminates us.